NeuroFluid — A new AI-based simulation paradigm for computational fluid dynamics by Xiaokang Yang’s Group

Over the decades, computational fluid dynamics has been a fundamental research field at the intersection of computer science and physics. One may describe the fluid dynamics by traditional physics theories such as Navier-Stokes equations, and then solve the equations with numerical methods. However, it is non-trivial to describe complex fluid systems (such as turbulence) where the underlying physical processes have not been fully discovered.

But a new paper from the AI+Science group at SJTU shows the power of leveraging deep learning and computer vision approaches, perhaps pointing a way forward for the field. The team, led by Xiaokang Yang and Yunbo Wang, professors at SJTU’s AI Institute, created a computer program called NeuroFluid that learns about the physical world of fluids (albeit a simplified version) by solving the inverse problem of fluid rendering.

The paper, NeuroFluid: Fluid Dynamics Grounding with Particle-Driven Neural Radiance Fields, was accepted by ICML, which is a leading academic conference in machine learning with an acceptance rate of 21.9% in 2022.

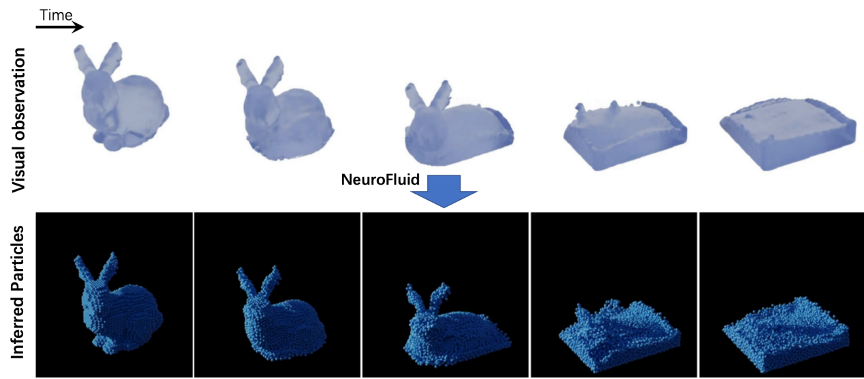

Fig. 1 NeuroFluid can infer fluid dynamics from the observed video.

NeuroFluid uses the Lagrangian description of fluids and models the system as a large set of particles. What is new in NeuroFluid is that it reasons about particle positions and velocities purely from visual observations. In contrast, existing learning-based approaches require ground-truth particle information as training supervision, a requirement that is hard to meet in real-world scenarios.
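To make the Lagrangian description concrete, here is a minimal sketch of a particle set in which each particle carries its own position and velocity. The `Particle` class, the `step` function, and the gravity-only update are illustrative assumptions; NeuroFluid's transition model is learned from video and is not reproduced here.

```python
from dataclasses import dataclass

GRAVITY = (0.0, -9.81, 0.0)  # assumed constant acceleration, for illustration only


@dataclass
class Particle:
    position: tuple  # (x, y, z)
    velocity: tuple  # (vx, vy, vz)


def step(particles, dt):
    """Advance every particle by one time step under gravity alone.

    The velocity is updated first and the new velocity moves the particle
    (semi-implicit Euler). A real fluid model would add pressure, viscosity,
    and boundary handling.
    """
    moved = []
    for p in particles:
        v = tuple(vi + gi * dt for vi, gi in zip(p.velocity, GRAVITY))
        x = tuple(xi + vi * dt for xi, vi in zip(p.position, v))
        moved.append(Particle(x, v))
    return moved


fluid = [Particle((0.0, 1.0, 0.0), (1.0, 0.0, 0.0))]
fluid = step(fluid, dt=0.1)
print(fluid[0])
```

The point of the sketch is only the data layout: the fluid state is a list of per-particle positions and velocities, which is what NeuroFluid has to infer without ever observing it directly.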

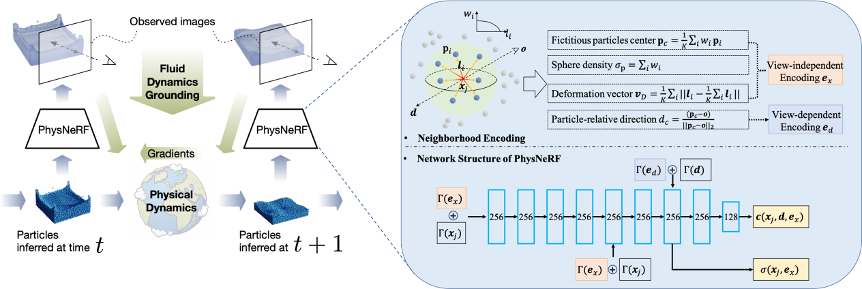

NeuroFluid is a neural network trained on a series of visual observations of fluids. As shown in Fig. 2, it consists of two pieces: a particle transition model and a particle-driven neural renderer (termed PhysNeRF). The optimization process of NeuroFluid includes three stages:

(1) Simulation: The particle transition model predicts the future trajectories of fluid particles, starting from the estimated initial states of the particles.

(2) Rendering (Fig. 2 Right): PhysNeRF renders the simulated particles according to their geometric properties.

(3) Causal inference: NeuroFluid computes the rendering loss between rendered images and observed images, and then back-propagates the gradients to optimize the fluid dynamics.
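The three stages above can be illustrated with a deliberately tiny toy problem: one particle moving along a 1-D pixel row, where the only unknown is its velocity. Everything in this sketch is an assumption made for illustration (the constant-velocity `simulate`, the Gaussian-blob `render`, and the finite-difference gradient that stands in for back-propagation); it is not NeuroFluid's transition model or PhysNeRF.

```python
import math

PIXELS = 16   # width of the 1-D "image"
SIGMA = 2.0   # blur radius of the rendered particle
X0 = 2.0      # initial position, assumed known in this toy setup
FRAMES = 6    # number of observed frames


def simulate(velocity):
    """Stage 1: roll the particle forward with a constant velocity."""
    return [X0 + velocity * t for t in range(FRAMES)]


def render(x):
    """Stage 2: splat the particle onto the pixel row as a Gaussian blob."""
    return [math.exp(-((j - x) ** 2) / (2 * SIGMA ** 2)) for j in range(PIXELS)]


def rendering_loss(velocity, observed):
    """Stage 3a: squared pixel error between rendered and observed frames."""
    total = 0.0
    for x, frame in zip(simulate(velocity), observed):
        total += sum((a - b) ** 2 for a, b in zip(render(x), frame))
    return total


def fit_velocity(observed, guess=0.5, lr=0.01, iters=200, h=1e-4):
    """Stage 3b: gradient descent on the velocity.

    A central finite difference replaces back-propagation to keep the
    sketch dependency-free.
    """
    v = guess
    for _ in range(iters):
        grad = (rendering_loss(v + h, observed) - rendering_loss(v - h, observed)) / (2 * h)
        v -= lr * grad
    return v


observed = [render(x) for x in simulate(1.0)]  # "video" generated with velocity 1.0
estimate = fit_velocity(observed)
print(round(estimate, 3))
```

The dynamics parameter is never supervised directly here: it is recovered only because a wrong velocity produces images that disagree with the observations, which is the same principle the rendering loss exploits at much larger scale.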

Fig. 2 Left: the overview of NeuroFluid. Right: the rendering process of PhysNeRF.

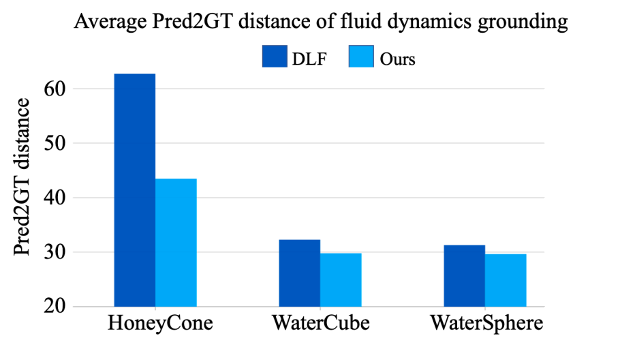

The researchers validated the effectiveness of NeuroFluid from four aspects: the accuracy of fluid dynamics grounding, the accuracy of future prediction, the performance of the renderer for novel view synthesis, and its generalization ability to unseen fluid scenes. Three fluid benchmarks (HoneyCone, WaterCube, and WaterSphere) are used in the experiments; they differ in initial fluid shape (cone, cube, and sphere) and physical material (honey and water).

(1) Fluid dynamics grounding accuracy.

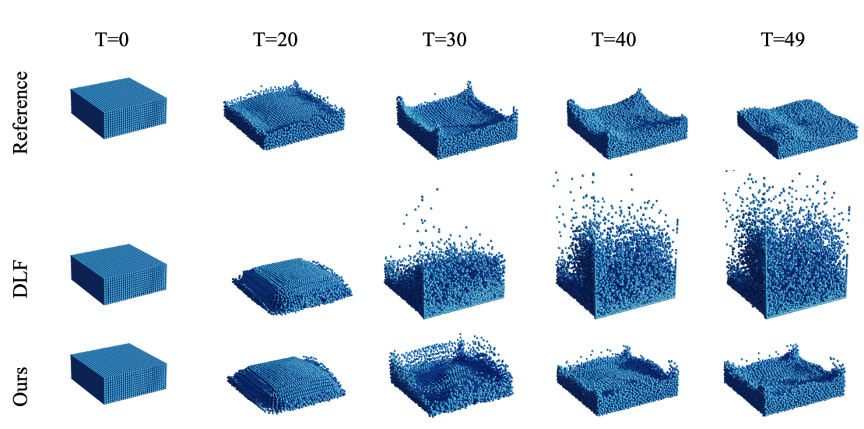

Existing learning-based fluid simulators, such as DLF [1], use the ground-truth particle positions and velocities as training supervision. NeuroFluid is compared with these approaches without access to ground-truth particles, which is a more challenging setting. As shown in Fig. 3, the particles inferred by NeuroFluid exhibit more plausible dynamics.

Fig. 3 Results of fluid dynamics grounding on WaterCube. Row 1: The ground-truth particles. Row 2: The particles estimated by DLF [1]. Row 3: The particles inferred by NeuroFluid.

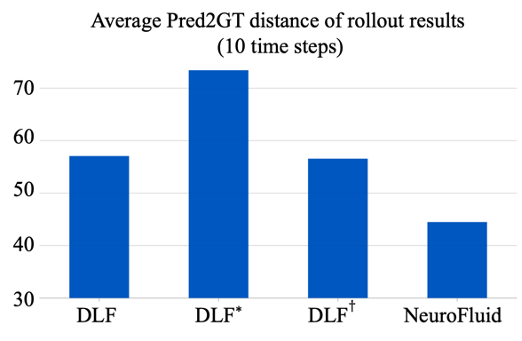

(2) Future prediction.

To assess whether the transition model has learned the intrinsic physical transition of fluid particles, Wang and his colleagues evaluate the predicted trajectories of the particles over 10 time steps into the future. Fig. 4 compares the predicted particle positions with the ground-truth particles (from a well-established non-learning-based fluid dynamics simulator). The results show that NeuroFluid can reasonably predict the future dynamics of fluids, which means the model generalizes in time beyond the span covered by the visual observations.
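A comparison of this kind can be sketched as a per-time-step position error. The article does not state which error metric is plotted, so the mean Euclidean distance used below, along with the function name `per_step_position_error` and the toy data, is an assumption for illustration.

```python
import math


def per_step_position_error(predicted, ground_truth):
    """Mean Euclidean distance between matched particles at each time step.

    Both arguments are lists over time steps; each entry is a list of
    (x, y, z) positions, with particles in the same order in both inputs.
    """
    errors = []
    for pred_step, true_step in zip(predicted, ground_truth):
        dists = [math.dist(p, q) for p, q in zip(pred_step, true_step)]
        errors.append(sum(dists) / len(dists))
    return errors


# Two particles tracked over two future time steps (made-up numbers).
predicted = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
]
ground_truth = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(3.0, 4.0, 0.0), (1.0, 0.0, 0.0)],
]
print(per_step_position_error(predicted, ground_truth))  # [0.0, 2.5]
```

Reporting one number per step, rather than a single average, makes it visible how quickly the error grows as the rollout moves further from the observed frames.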

Fig. 4 Prediction errors of particle positions over 10 time steps into the future. DLF*: Fine-tuning DLF on the data with similar physical properties to the evaluation benchmarks. : Directly fine-tuning DLF on the evaluation benchmarks.

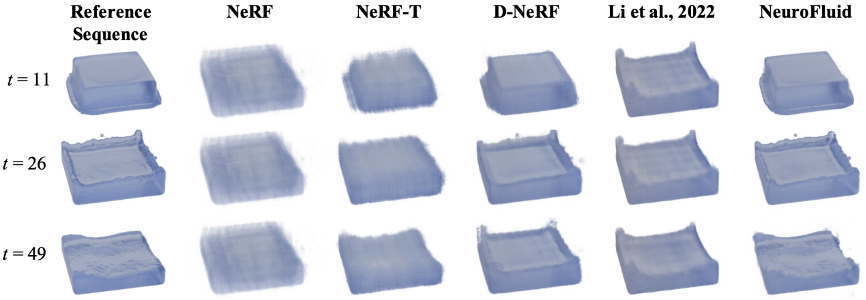

(3) Novel view synthesis.

To evaluate the performance of the renderer (PhysNeRF), the research group compares NeuroFluid with previous differentiable renderers, including NeRF [2], NeRF-T (using time step encoding), D-NeRF [3], and Li et al. (2022) [4]. The rendered images in Fig. 5 indicate that, by employing particle geometries, NeuroFluid not only captures the fluid dynamics but also preserves fine details of fluids (such as the droplets in the last column).

Fig. 5 Novel view synthesis for WaterCube. The first column shows the reference image sequence from a novel view that is not seen during training.

(4) Generalization to a novel fluid scene.

One of the paper's contributions is to correlate particle geometries (for dynamics) with neural radiance fields (for rendering). To verify that PhysNeRF learns particle-related information, Wang and his colleagues directly evaluate a well-trained PhysNeRF on a novel scene with a complicated initial shape (Stanford Bunny). As shown in Fig. 6, PhysNeRF renders the surface details faithfully without further fine-tuning on the particles of the Stanford Bunny.

Fig. 6 The generalization ability of PhysNeRF to novel fluid scenes without further fine-tuning.

References:

[1] Ummenhofer, Benjamin, et al. Lagrangian fluid simulation with continuous convolutions. In ICLR, 2019.

[2] Mildenhall, Ben, et al. NeRF: Representing scenes as neural radiance fields for view synthesis. In ECCV, 2020.

[3] Pumarola, Albert, et al. D-NeRF: Neural radiance fields for dynamic scenes. In CVPR, 2021.

[4] Li, Yunzhu, et al. 3D neural scene representations for visuomotor control. In CoRL, 2022.

[5] Sanchez-Gonzalez, Alvaro, et al. Learning to Simulate Complex Physics with Graph Networks. In ICML, 2020.

Authors: Shanyan Guan, Huayu Deng, Yunbo Wang, Xiaokang Yang.

Paper: arxiv.org/pdf/2203.01762.pdf

Code: github.com/syguan96/NeuroFluid

Project Page: syguan96.github.io/NeuroFluid/
